Boffins demo 'memcomputer', plot von Neumann's retirement

Memory and processing in the same transistor

Shuffling stuff between memory and the CPU is one of the biggest wasters of time and electricity inside a computer, so boffins from the University of California, San Diego, have demonstrated a prototype “memcomputer” that has memory and compute in the same place.

The memcomputer concept has been around since the 1970s, as noted by Popular Mechanics.

As well as trying to use the same physical units for both processing and memory, the memcomputer concept proposes that the act of processing could be used as a kind of memory.

That's what Massimiliano Di Ventra and his collaborators (Fabio Lorenzo Traversa, Chiara Ramella, and Fabrizio Bonani) have published in Science, using a Fourier Transform as their test case.

What Di Ventra has done is to craft a transistor that acts like a gate for processing, but that also uses a secondary property like electrical resistance for storage. As explained to Pop Sci, varying the amount of charge moving through the transistor can be used to change the resistance property, which remains stored even when the memcomputer is powered off.

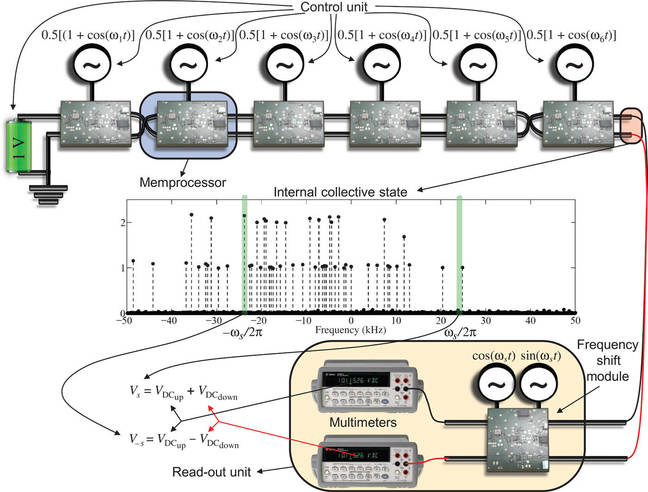

The UCSD boffins' memcomputer setup for running a Fourier Transform

As well as getting rid of data-shuffling between processor and memory, there's another aspect to the architecture that would fit under the heading “huge if true”: it can represent multiple states simultaneously, speeding up solutions to the kinds of problems von Neumann architectures can only handle iteratively.

For instance, “how many numbers in this set add up to ten?” is a question that quickly takes up a lot of compute resources – all you need is to make the set large enough.
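To see why that kind of question gets expensive on a conventional machine, here is a minimal Python sketch of the naive approach: check every possible subset, one after another. The function names `subsets_summing_to` and `candidates_checked` are made up for illustration and have nothing to do with the researchers' work; smarter classical algorithms exist, but the brute-force version shows how quickly the number of candidates grows.

```python
from itertools import combinations


def subsets_summing_to(numbers, target):
    """Return every subset (as a tuple) of `numbers` whose elements sum to `target`."""
    hits = []
    # Try every subset size, then every subset of that size.
    # Across all sizes that is 2**n - 1 non-empty candidates for n numbers.
    for size in range(1, len(numbers) + 1):
        for subset in combinations(numbers, size):
            if sum(subset) == target:
                hits.append(subset)
    return hits


def candidates_checked(n):
    """Number of non-empty subsets a brute-force search examines for n numbers."""
    return 2 ** n - 1


if __name__ == "__main__":
    print(subsets_summing_to([1, 2, 3, 4, 5], 10))
    for n in (5, 20, 40):
        print(n, "numbers:", candidates_checked(n), "subsets to check")
```

Five numbers mean 31 subsets to check; 40 numbers mean more than a trillion, and each extra number doubles the work.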

In a conference abstract, Di Ventra says the proposed “Universal Memcomputing Machine” is Turing-complete, but has a characteristic similar to (but easier to achieve than) quantum computing: collective states.

“[T]he presence of collective states in UMMs … allows them to solve NP-complete problems in polynomial time using polynomial resources,” that note claims.

The Science abstract claims that “We show an experimental demonstration of an actual memcomputing architecture that solves the NP-complete version of the subset sum problem in only one step and is composed of a number of memprocessors that scales linearly with the size of the problem”.

In other words, the kind of problem that a standard von Neumann-architecture computer can only solve with a step-by-step iteration, the memcomputer can accelerate.

For a problem that might need 10 trillion iterations in today's architecture, the UCSD boffins reckon a memcomputer might only need 10 million iterations.

And best of all: they note that they've fabricated their demonstration machine using standard microelectronics, and that it operates at room temperature.

However, the memcomputers are, like quantum computers, limited by noise “and will thus require error-correcting codes to scale to an arbitrary number of memprocessors”. ®