The gift of Grace: COBOL's odyssey from Vietnam to the Square Mile

Add 50, 1964 GIVING Anniversary

Cobol is the language most associated with mainframes, especially the IBM System/360, whose 50th anniversary is being celebrated or at least commemorated this week. But when COBOL was first spawned in the late 1950s, it wasn’t intended for programmers.

It was aimed instead at “accountants and business managers” – basically a Stone Age Excel that turned into something quite different. In fact, IBM spent serious effort fighting against COBOL becoming a standard, for both good and bad reasons.

The ironies run deep here: Grace Hopper is on the wall of every school as a “role model” for women in programming despite being a prime mover in what is widely regarded as the worst mistake in computing history. COBOL earned that reputation by lacking almost every feature that good programming languages have, as well as by being absurdly verbose, as in:

ADD First, Second GIVING Sum
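For contrast, here is roughly what that statement does, sketched in Python rather than anything from the period. The helper name `add_giving` is invented purely for illustration, and the operands are borrowed from the strapline above.

```python
def add_giving(*operands):
    """Hypothetical helper mimicking COBOL's ADD a, b GIVING c: sum the operands."""
    return sum(operands)

first, second = 50, 1964

# What most later languages would write instead of ADD ... GIVING ...
anniversary = first + second

# The illustrative helper and the plain expression agree.
assert anniversary == add_giving(first, second)
print(anniversary)
```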

It was also instrumental in the US armed forces' work in Vietnam, which also is not universally regarded as a success. 1950s IBM (or 1990s IBM for that matter) didn’t like any standard it did not control and could not use to tighten its vicelike grip on the whole IT market, which was centred on mainframes.

But once the Codasyl bandwagon started rolling, IBM got on board with alacrity, and the irony has since come full circle, with IBM now a big player in open source.

From the '50s until the '80s, computer firms made the bulk of their money from hardware and developed software like Cobol, CICS, DB2 and VMS as ways of making the hardware usable and therefore creating more demand for more horsepower. This worked to the extent that much of the commercial justification for IBM PCs was that each “smart terminal” increased load on the "host" (IBM-ese for mainframe) by about half a MIP in profitable hardware terms. Today, the two remaining Cobol compiler teams actually use much the same techniques for optimising Cobol as for C++.

Real Programmers, of course, used Assembler and Fortran, because compilers were pitifully inefficient even though instruction sets were simpler. Processors did not require you to re-order instructions for best performance and RISC wasn’t even sci-fi yet.

If you can remember COBOL in the '60s, you probably were there as it quickly became the language of choice for online data processing: handling payments, stock control and real-time air defence systems. No, I’m not making that up. We scared the Russians so much with our sophisticated COBOL-based defences that they never dared attack, and it may or may not be coincidence that President Putin started throwing his weight around just as we retired them. Those systems held on for decades because, despite the sneering of the cool kids (like me) who pushed C & C++ and the Quiche Eating Pascal/Java fanbois, the mainframe Cobol stuff focused on being rock solid rather than interesting. Ask yourself: do you want a more reliable air traffic control system or one that’s more exciting?

Pay and status reflected the different levels of complexity, with Assembler programmers earning more than COBOLers and mainframe people earning more than mini people. That’s on average of course, but for most of the period 1955 to 1995, the bigger the machine you worked on, the more you got paid: Mainframe > Mini > PC. Even when most of us moved to Client/Server during the '80s and '90s, running big database systems was one of the best gigs in town... if you could stand the boredom.

The S/360 had made programming less of an experimental science and more of something that at least called itself a form of engineering (something that still hasn’t fully happened). This was because the S/360 was designed, rather than thrown together on the basis of “we need a thing to do that, so we’ll stick it in this part”. It also gave rise to the most important book ever written on software engineering, The Mythical Man-Month, a book of essays so good that not only have four of my copies been stolen by lesser programmers, but I’ve spent my own money buying replacements.

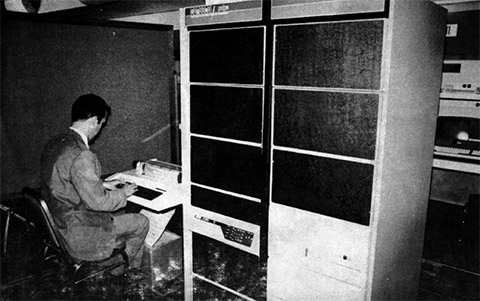

A Portable Computer: circa 1977

Cobol had no problem embracing and crushing the dreams of the '70s and of minicomputer makers like PDP-11-maker DEC, Prime Computer, DG, IBM and even Wang Labs. Each of these players had a Cobol of its own, each different enough to make it easy to write code but hard to port it. This created a lock-in that enriched the manufacturers, but in the long run the only one left standing in the same sector is IBM.

That’s created a substantial niche that Micro Focus has done really rather well out of, providing a path to viable hardware and operating system platforms for many corporates. So why not just chuck it away and move to a language younger than the people writing it? You'd think that because, as an ITPro, most of your work is in changing things, but those under the delusion that the firm exists to make money are more than happy to leave well enough alone.

Cobol developers did rather well out of the big expansion of financial services in the 1980s. Although PCs were popping up on desks, they lacked muscle, the right applications and reliability. VAXes became so hot that one over-excited headhunter decided that because my mates and I did VAX C, this was much the same thing, referred to me as a “shit hot Cobol ace” and somehow persuaded Chase Manhattan Bank to fly us out to New York, sight unseen. The rates offered were so good that for a good long minute I was tempted to spend the flight with the manuals on my knees, but a rare outbreak of honesty and being part of the “Cobol will die soon” consensus kept me out of harm’s way.